This Test Might Be An Easy Way To Distinguish Humans From AI Like ChatGPT

Now that advanced AI like ChatGPT and Bard exist, we're starting to worry about what it means for humanity. Some are concerned that AI will eventually eradicate humanity and are asking researchers to temper their work. I already explained that putting the ChatGPT generative AI genie back in the bottle is practically impossible. It's only a matter of time until a ChatGPT model that's virtually indistinguishable from a human emerges. We're not quite there yet, and there are still ways to figure out if you're dealing with AI or a person. One such method is the "Capital Letter Test," which might help you distinguish a human from ChatGPT-like programs.

Comparing ourselves with machines is silly, but this is the kind of insecurity we'll have to deal with before we accept the ultimate conclusion. We might train generative AI products like ChatGPT, but once AI becomes sophisticated enough, we won't be a match for it.

It'll be a while until artificial general intelligence (AGI) arrives. That's AI that can wrap its digital mind around any concept and become indistinguishable from a human.

While some expect OpenAI's GPT-5 upgrade to deliver AGI, it might not happen very soon. It could take years to get there. And even when we witness AGI, we'll still try to find tricks to prove that AI isn't smarter than humans.

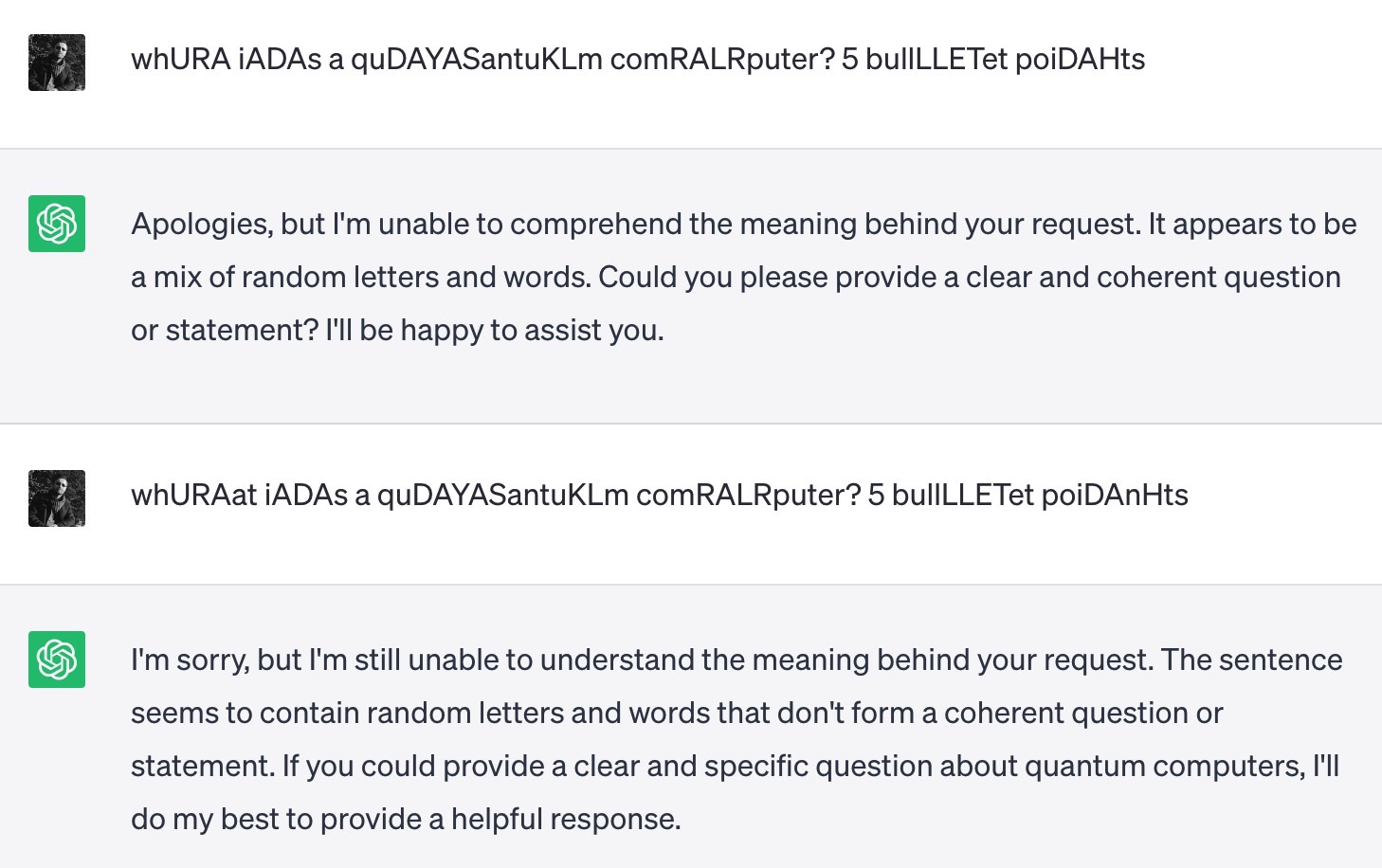

The Capital Letter Test is one such trick, and it works with ChatGPT in its current state. The concept is simple: you insert random capital letters into the prompts you give a chatbot to see if it gets confused.

The idea is that a human would still be able to understand the question and then provide an answer. A new research paper explains the concept, and ChatGPT users have put it to the test.
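If you'd rather not mangle your prompts by hand, here's a rough Python sketch of how you might generate one. Treat it as an illustration only: the function name `randomize_caps` and the `rate` and `seed` parameters are my own assumptions, not something taken from the research paper or from the users who tried the test, and the paper's exact procedure may differ.

```python
import random


def randomize_caps(prompt, rate=0.4, seed=None):
    """Return the prompt with a random subset of its letters uppercased.

    rate is the chance (0.0 to 1.0) that any given letter is capitalized.
    seed is optional and only makes the result repeatable.
    Both are hypothetical knobs chosen for this sketch.
    """
    rng = random.Random(seed)
    result = []
    for ch in prompt:
        # Only letters can be capitalized; spaces and punctuation pass through.
        if ch.isalpha() and rng.random() < rate:
            result.append(ch.upper())
        else:
            result.append(ch)
    return "".join(result)


# Example: scramble the capitalization of a simple question.
print(randomize_caps("what is a quantum computer?", seed=42))
```

A person reading the output should still recognize the original question, which is the whole point of the test. Raising `rate` capitalizes more letters and makes the prompt harder to read.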

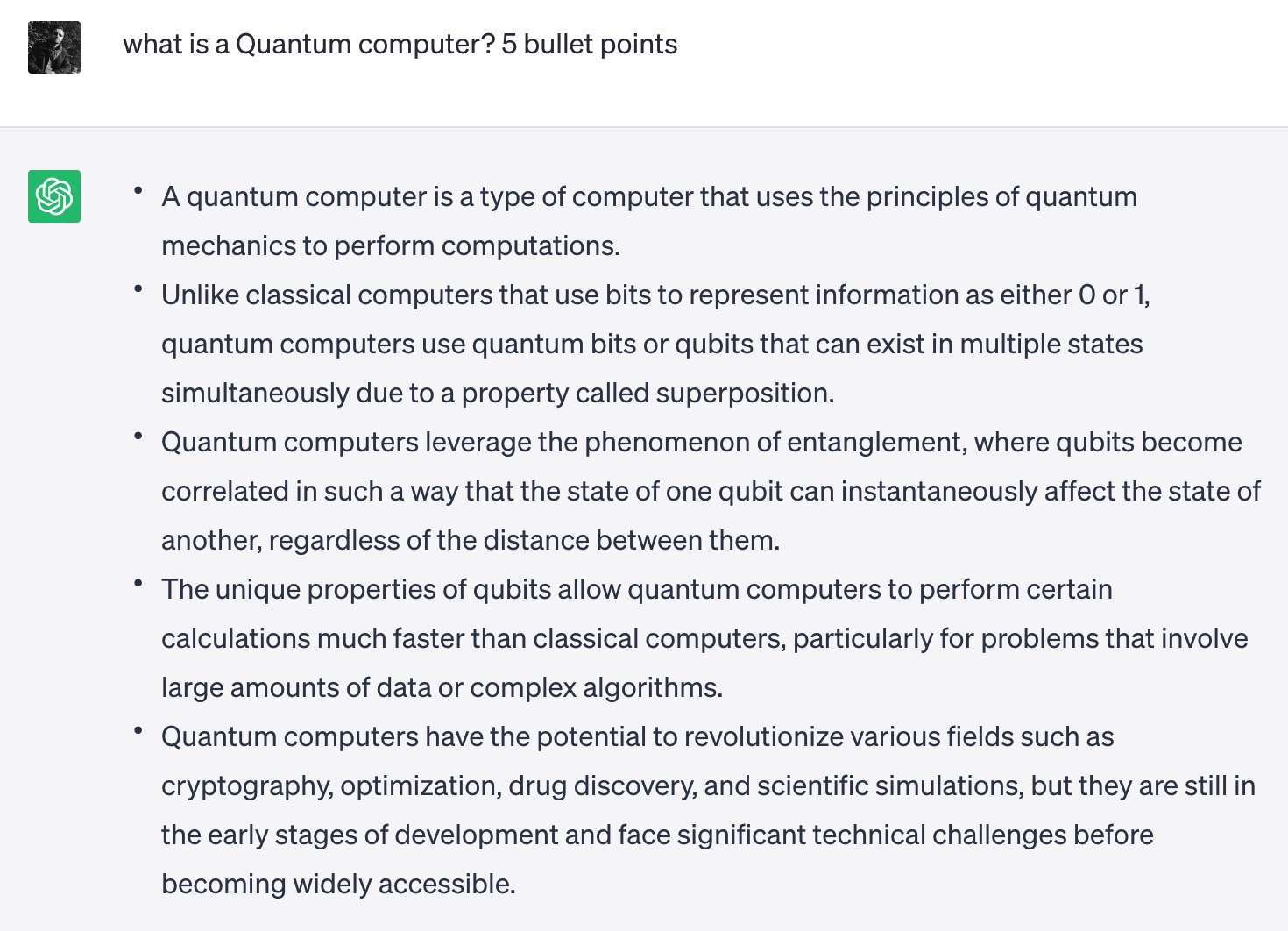

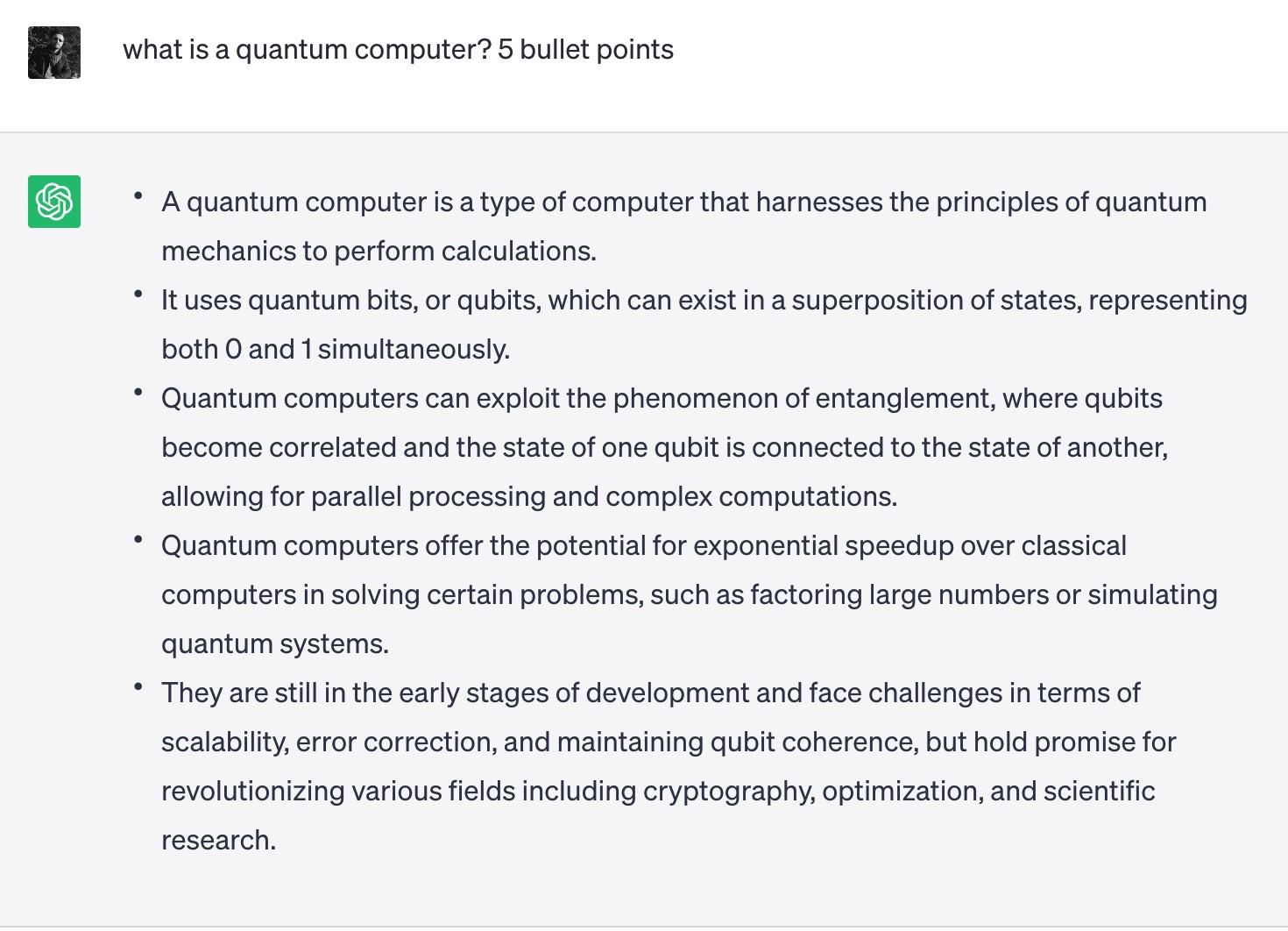

I also tried the Capital Letter Test on ChatGPT myself. I asked what a quantum computer is and capitalized a single letter in my question, writing "Quantum" with a capital Q.

Then I asked the same question without any capital letters in it.

But it turns out that I had failed the test. I was doing it wrong.

ChatGPT gave me similar answers because it understood the prompt both times.

Then, I added random capital letters within each of the words in the previous prompt, as seen on Reddit. That's when ChatGPT gave up on me, telling me it couldn't understand.

Yay for the human race! I just won a silly contest with a machine while wasting processing resources on these prompts.

The Capital Letter Test might be important down the road, especially if you think you're talking to a chatbot rather than a human. But I'd expect the test to fail in the future once language models become even more powerful. I'll remind you of a similar quirk that used to crash ChatGPT's brain: asking it to repeat the same capital letter. But that seems to have been fixed.