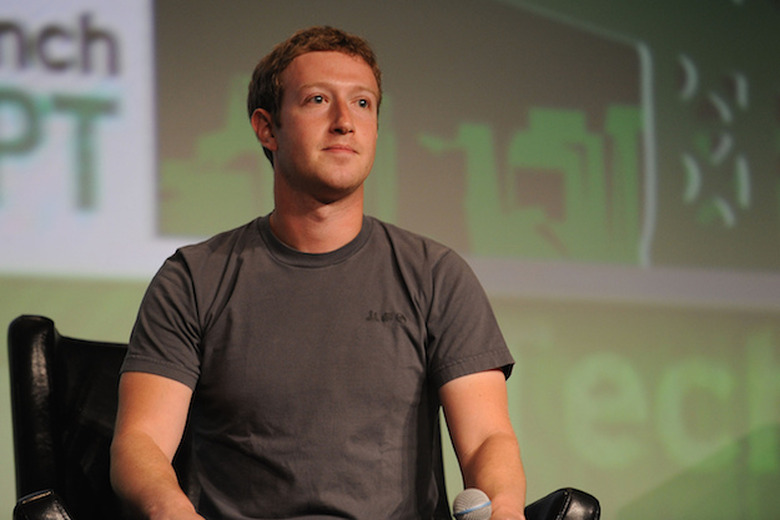

Mark Zuckerberg: The Idea That Facebook Influenced The Election Is 'Crazy'

In the wake of Donald Trump's unlikely rise to the Presidency, a growing narrative in Silicon Valley and journalistic circles holds that fake stories on Facebook had a significant impact on the election results. Specifically, detractors point to the social networking giant's ongoing struggle to identify and remove completely fake stories in a timely manner.

As the argument goes, because Facebook has a massive user base and because the site itself is built on the idea of sharing information, a fake story tilted against a particular candidate can gain an immense amount of traction incredibly quickly. In the process, the cumulative power of these fake news stories to sway voters grows.

Addressing these concerns, Facebook PR yesterday said that it takes "misinformation on Facebook very seriously" and that it will continue to work hard to "improve our ability to detect misinformation."

So while Facebook PR took the route above, Facebook CEO Mark Zuckerberg opted to go in a different direction. While speaking at the Techonomy conference yesterday, the Facebook founder scoffed at the notion that fake stories on the social networking site helped sway the election in favor of Donald Trump.

"Personally," Zuckerberg said, "I think the idea that fake news on Facebook, of which it's a small amount of content, influenced the election in any way is a pretty crazy idea."

"I do think there is a profound lack of empathy in asserting that the only reason someone could have voted the way they did is fake news," Zuckerberg added.

It's an interesting point and I'm inclined to agree. Donald Trump's election caught nearly everyone by surprise, including, according to some reports, many of his closest advisors. Consequently, it's only natural for those who oppose a Donald Trump Presidency to look around and point fingers amidst the struggle to come up with a cogent answer to "What went wrong?"

That said, putting Facebook in the crosshairs seems like one of the weaker arguments I've seen floating around. And building off of what Zuckerberg said, claiming that the only reason a candidate you don't like was elected is a fake Facebook news story here or there is laughably simplistic. It completely disregards the idea that voters inclined to believe a bombastic headline in either political direction likely have their minds made up already.

As a study earlier this year posited, the problem isn't so much Facebook as it is the psychology of human nature.

To this point, Bloomberg writes:

Why does misinformation spread so quickly on social media? Why doesn't it get corrected? When the truth is so easy to find, why do people accept falsehoods?

A new study focusing on Facebook users provides strong evidence that the explanation is confirmation bias: people's tendency to seek out information that confirms their beliefs, and to ignore contrary information.

All that said, I think it's abundantly clear that Facebook's decision to fire its human curators of trending news stories was a huge mistake. Unfortunately, the social networking giant appears more intent on recalibrating its algorithms than on bringing human editors back into the mix.