Google’s new Pixel 4 and Pixel 4 XL flagship smartphones were announced last week, and they’ll land on store shelves this coming Thursday, October 24th. In fact, they’ll land on more store shelves here in the United States than ever before thanks to new wireless carrier deals. Pixel phones have never sold anywhere near as well as flagships from top smartphone makers like Apple and Samsung, but they’re typically fan favorites among avid Android users who recognize the many benefits of owning a Pixel phone. A pure Android experience free of vendor bloat and an incredible camera are both near the top of the list, but immediate access to new Android updates trumps everything else. Simply put, the only way to guarantee that you always have the latest and greatest new Android features is to buy a Pixel phone.

With the release of the new Pixel 4 series fast approaching and Google’s review embargo having been lifted on Monday morning, there’s tons of coverage flying all around the web right now. Of course, the biggest question on everyone’s mind seems to be how the new Pixel 4 series stacks up to Apple’s iPhone 11, iPhone 11 Pro, and iPhone 11 Pro Max. This is a question we’ll continue to address, but there’s no better way to start than by highlighting some of the biggest differences between Google’s Pixel 4 phones and Apple’s iPhone 11 lineup.

Motion Sense

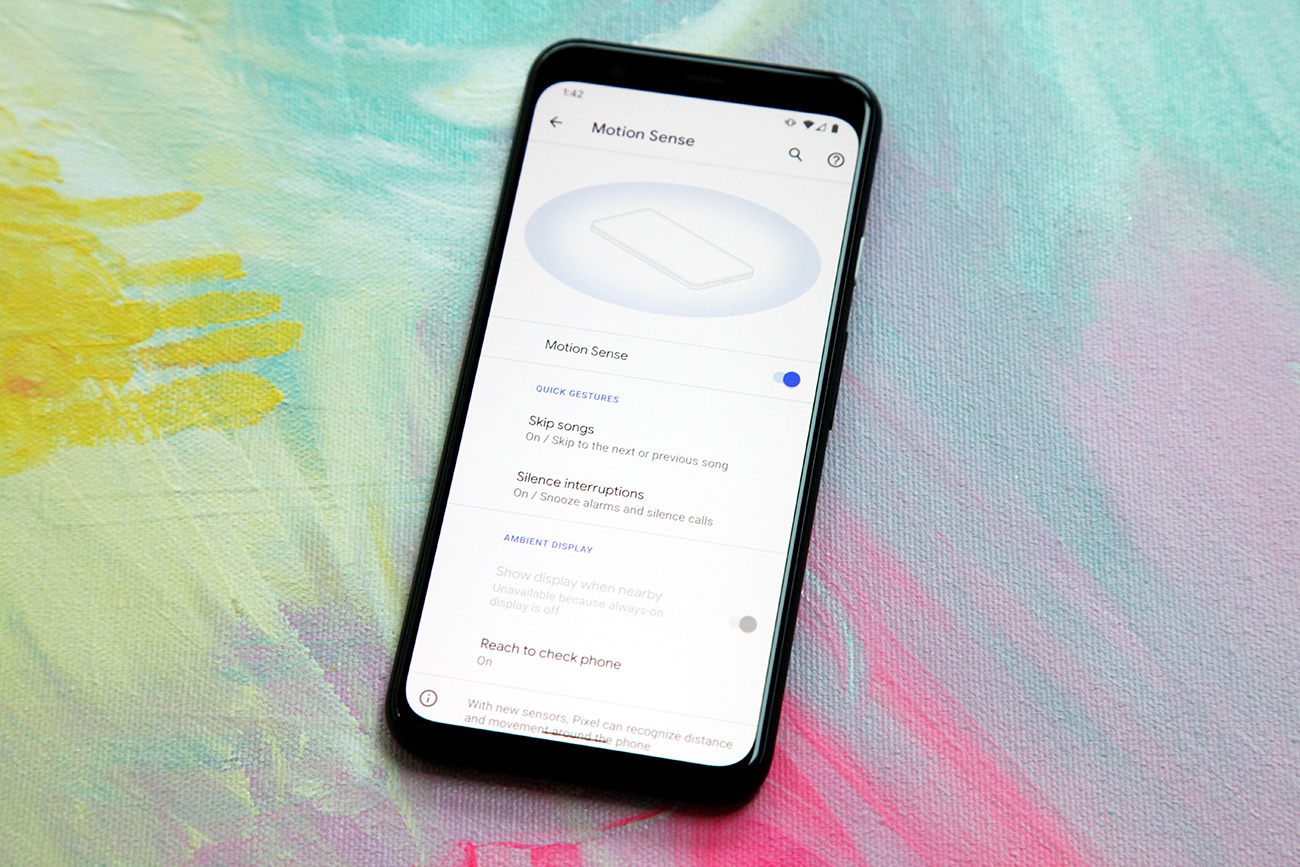

Google’s new Motion Sense feature powered by the Project Soli radar chip is by far the most revolutionary new feature in the Pixel 4 and Pixel 4 XL. Is it actually all that useful? Well, that’s open to debate.

We covered this a bit in our earlier Pixel 4 coverage, but Project Soli at this point is basically Google’s equivalent of 3D Touch in Apple’s iPhones. There’s no question whatsoever that it’s a revolutionary technology, but there’s also no question that all of the functions it performs right now could easily be replaced by existing tech like the front-facing camera and the accelerometer.

In a nutshell, Project Soli is a tiny radar chip in the Pixel 4 and Pixel 4 XL that can sense motion around the device. This way, users can wave a hand to skip songs or snooze alarms. Additionally, this tech allows the Pixel 4 to turn off the always-on display feature if no one is near the phone, and it turns the screen on when someone reaches for the phone so it can activate face unlock. That’s it. If you’re saying to yourself, “there are already phones that do all of those things,” you’d be correct, and yet none of them have a radar sensor.

Apple took five years to develop 3D Touch and then abandoned it after just four. Hopefully Google is cooking up some novel functionality to be rolled out in the future; otherwise, Project Soli might suffer the same fate.

Recorder with transcription and search

The Pixel 4’s new Recorder app doesn’t use actual magic to instantly transcribe speech, but I’m not sure I would argue with Google engineers if they said it does. This phenomenal new app works just like the voice recorder apps you might find on other smartphones, but it transcribes speech into text in real time as it records. What’s more, since all of your recordings will have text attached to them, you can search by keyword in the app to find the recording you’re looking for.

It’s awesome — and unlike most impressive Google services, it all happens on the device so nothing is sent to Google’s servers.

Live Caption

Live Caption is an awesome new feature on the Pixel 4 that uses the same backend technology as the Recorder app’s real-time transcription feature. Instead of transcribing speech as you record it, however, it adds captions to any video playing on your phone or streaming to your phone. I would like to see Google add support for Live Caption during video calls as well, but this feature is still pretty terrific as-is.

6GB of RAM

RAM typically isn’t much of an issue for iPhones since the platform is optimized so well. This year, however, things are a bit different. As was the case with iOS 11, iOS 13 has some problems with RAM management that have been impacting real-life performance. Perhaps if Apple’s iPhones had 6GB like the Pixel 4 instead of just 4GB, this wouldn’t be such an issue.

Universal back gestures

This might seem insignificant if you’re a lifelong Android user, but the fact that the back gesture isn’t universal on the iPhone is infuriating at times. Some apps support the back swipe and others don’t. Some apps have a back button in the top-left corner and others don’t. It’s ridiculous, especially on a mobile platform that is generally pretty good with consistency. On the Pixel 4 and Pixel 4 XL, you can swipe in from the left or the right side of the screen to go back. It works everywhere, all the time. Thank you, Google.

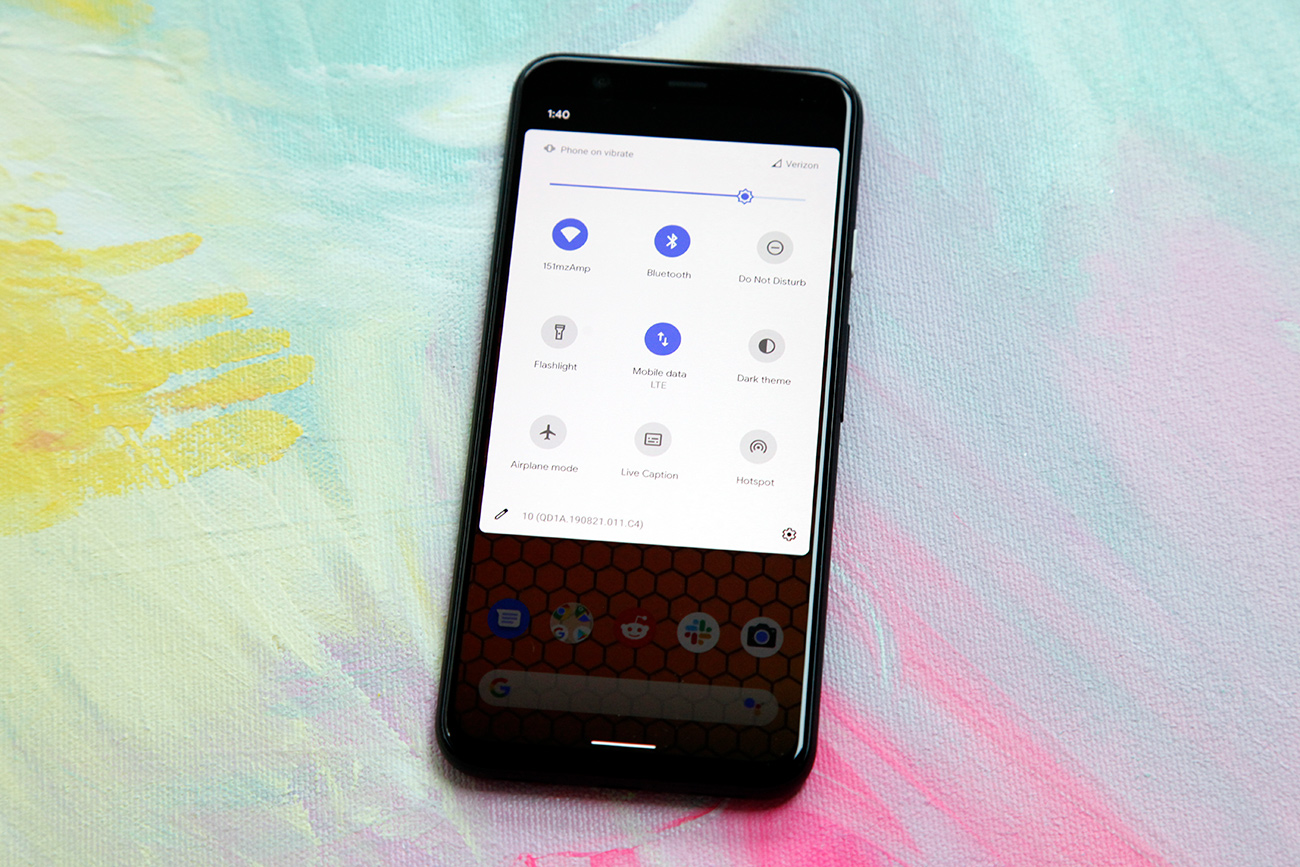

Versatile Quick Settings menu

The Control Center in iOS is terrific compared to not having anything at all, but it’s not all that great compared to the Quick Settings menu on Google’s Pixel 4 and Pixel 4 XL. For one thing, you can rearrange tiles on the Pixel 4 right from within the Quick Settings menu instead of having to dig through the phone’s main Settings app. But the kicker is that there’s support for third-party tiles on the Pixel phones! Other Android phones work the same way, and it would be terrific if iPhones did as well.

Photos with bright areas and dark shadows

Honestly, I was shocked last month when I saw how much better Apple’s new Night mode is on the iPhone 11 series than Night Sight on Google’s Pixel phones. Even more shocking is the fact that even with the Pixel 4’s improvements, it’s still no match for what Apple has achieved. The iPhone 11’s camera is also better all around than the Pixel 4’s, as we’ve seen in countless comparisons, but there’s one type of scene in particular where the Pixel 4 outshines the iPhone. As you can see in a comparison we linked last week, the Pixel 4 takes much better photos when parts of the frame are brightly lit and other parts of the frame have dark shadows.

Dual exposure controls

The Pixel 4’s camera does a great job accommodating different types of lighting on its own, but the real fun starts when you begin making some manual adjustments. Tap anywhere in the frame while capturing a photo and instead of getting one slider to adjust exposure, as you see on other smartphones, you get two. One adjusts the brightness of highlights while the other adjusts the brightness of shadows. Being able to fine-tune both lets you capture the exact shot you want.

Zoom

There’s one more area where Google’s new Pixel 4 camera beats Apple’s new iPhone 11 and iPhone 11 Pro cameras, and it’s actually the area with what I believe is the widest margin in Google’s favor. Put simply, the Pixel 4’s new and improved Super Res Zoom feature is fantastic. This AI-assisted feature captures multiple photos in rapid succession before and after you tap the shutter button when zooming. It then pulls data from all of the photos and stitches them together with impressive clarity.

Last year’s Pixel 3 series phones have a first-generation version of Super Res Zoom, but the new update is a totally different beast. Believe it or not, the image above was taken with the Pixel 4’s camera at 8x zoom, and you can click to enlarge it and see the impressive clarity.

Car crash detection

Device makers continue to come up with impressive new ways to provide assistance to users in need. Apple’s big new emergency assistance feature is fall detection on the Apple Watch, but the iPhone has plenty of safety features as well. One thing it doesn’t have, however, is the Pixel 4’s new car crash detection.

Using the phone’s sensors, car crash detection can determine when you’ve been in an accident. In the event of a collision, the phone will vibrate and sound a loud alarm. If you don’t manually disable the alarm within a certain amount of time, the Pixel 4 will call 911 on its own and attempt to provide emergency services with your location.

Big, ugly, asymmetrical bezels

Just… no.