I Think Microsoft Security Copilot Might Be The Best Use Of ChatGPT Yet

Since ChatGPT's arrival, we've seen plenty of mind-blowing use cases for the generative AI software that will change how we work. But I think the Security Copilot that Microsoft just announced might be one of the best products enabled by ChatGPT, if not the best. As the name indicates, Microsoft Security Copilot is a product that will improve online security.

That might sound boring to most people. And Security Copilot will not be available to regular consumers since it targets enterprise clients. But you know what's not boring? Security breaches. They happen all the time, and they can have disastrous consequences.

The only way to defend against them is to employ best practices while online and stay informed about the newest threats. That also sounds tedious and cumbersome. And even then, you might not be completely safe, as hackers can exploit security issues in products that IT departments have yet to patch.

That's where products like Microsoft Security Copilot will come in handy. The ChatGPT-based security product will significantly improve the response times to online threats, allowing understaffed security teams to put up better defenses. In turn, internet users who never even have to interact with Security Copilot will benefit from much better security than before.

The more organizations that use Security Copilot or similar products, the better the overall security should be. This could lead to fewer data breaches, as AI might catch attackers before they penetrate a company's defenses. ChatGPT-powered security software could also speed up the development of security updates that patch newly discovered exploits.

Security Copilot doesn't rely solely on OpenAI's GPT-4 language model, which also powers ChatGPT, to get the job done. It also employs a security-specific model from Microsoft. The latter "incorporates a growing set of security-specific skills and is informed by Microsoft's unique global threat intelligence and more than 65 trillion daily signals," according to the company.

Security Copilot won't put ChatGPT in the driver's seat when defending a company against cyber threats. We're not quite there yet. The security product will act as an assistant to security experts in charge of IT defenses. But Security Copilot might significantly improve how security experts deal with threats in real time.

The ChatGPT-based software solution will continuously learn from users. But an organization's data will remain private. Microsoft says it will not use the data to train the foundation AI models. Users in the same organization can share their Security Copilot interactions while dealing with a threat. And the system will keep a record of each interaction, which can be retrieved later when reviewing an investigation.

Microsoft also warns that Security Copilot might sometimes deliver inaccurate information as it assesses threats. But IT department members will be able to report errors as they catch them. As a reminder, ChatGPT can make mistakes, so any product incorporating the language model would be prone to some factual issues.

That said, Security Copilot looks like a massive upgrade, putting ChatGPT to great use.

Microsoft says that Security Copilot should respond to security incidents within minutes instead of hours or days. That way, you might not have to wait months to learn how and why your LastPass account was hacked.

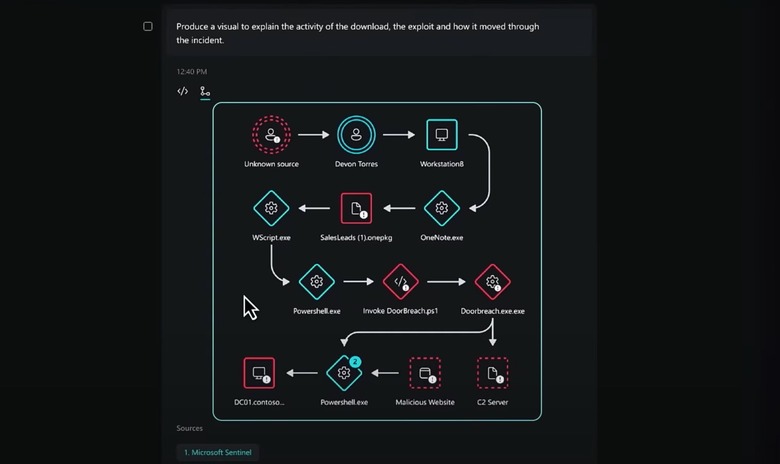

The AI product could also catch hidden malicious behavior that humans might miss. And it can even reverse-engineer exploits it detects, as seen in the video above.

Security Copilot will also be able to rapidly generate reports and presentations about active investigations, which would be available immediately inside an organization.

All of that will significantly speed up response times to security threats. And that's how ChatGPT will protect your online security without you ever having to worry about buying or installing Security Copilot on your devices. I think that's a massive security upgrade that Microsoft is deploying, one we'll hopefully take for granted in the near future, despite the obvious Terminator vibes such security products might give off.

That said, it's unclear how much Security Copilot would cost a company looking to bolster its online security with the help of ChatGPT. But it sure looks like the kind of security product IT departments should consider buying right away.

While we're at it, the EU's Europol earlier this week highlighted the ChatGPT features that can help malicious actors. Hackers can use the generative AI to create better phishing campaigns and code malicious sites and apps. Microsoft's Security Copilot comes just in time for such attacks. The best way to beat security threats created with the help of AI is AI.