Despite Apple’s speed in bringing Siri to customers long before the Google Assistant or Amazon Alexa, its digital assistant has slowly become the butt of most jokes. Compared to the thousands of integrations and skills that you can pull up on your Google Home, Siri has always felt inaccurate, misinformed, or just plain dumb.

Of course, Apple was never going to let that state of affairs stand, and the company has been taking radical steps to overhaul Siri. Shortcuts and deeper integrations with third-party apps are coming in iOS 12 later this year, and Apple has appointed a former Google AI chief to oversee a reboot of its artificial intelligence group, Siri included.

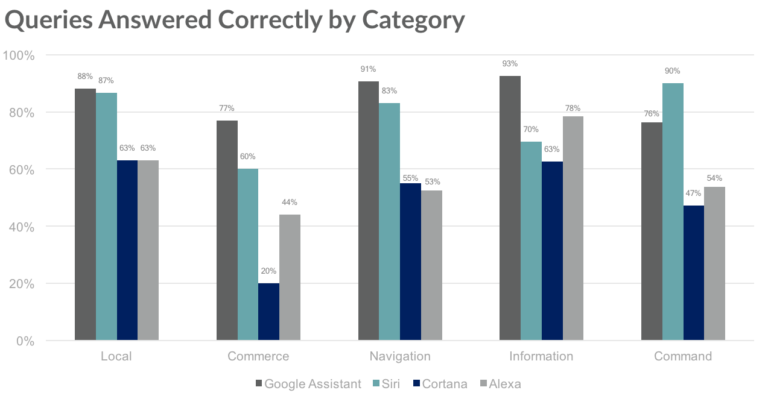

Regardless of the reason, new data from Loup Ventures shows that Siri’s performance is catching up to the rest of the pack at speed. Loup ran a digital assistant “IQ” test last year, asking Siri, Google Assistant, and Cortana 800 questions each. The questions come from five categories, and are scored based on whether the assistant understood the question, and whether it provided useful information in response. Loup explains its five categories:

- Local – Where is the nearest coffee shop?
- Commerce – Can you order me more paper towels?
- Navigation – How do I get to uptown on the bus?
- Information – Who do the Twins play tonight?
- Command – Remind me to call Steve at 2pm today.

Loup re-ran its test this year, adding Amazon Alexa into the mix. One thing worth bearing in mind before looking at the results is that Siri and Google Assistant are the only two digital assistants designed from the ground up to work with smartphones; Alexa and Cortana run as apps on non-native operating systems. That gives an inherent advantage to Siri and Google Assistant, which perhaps explains why Alexa (which is widely regarded as one of the best virtual assistants) didn’t score too highly.

As you can see from the graph, Google Assistant won every category apart from Command, with Siri consistently in second place. Overall, Siri saw a significant improvement year-on-year: in 2017, it understood 95% of the questions and answered 66% correctly. This time around, it understood 99% of the questions, and answered 78% correctly. That’s a bigger improvement than either Google Assistant or Cortana, and it’s even more impressive when you consider that the questions were changed a little from year to year.
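For readers who want to check the arithmetic, here is a minimal sketch that works out Siri's year-on-year change in percentage points, using only the four figures quoted above. The dictionary layout and the `point_change` helper are illustrative names of our own, not anything Loup published.

```python
# Siri's figures as quoted in the article (percent of questions).
# The keys "2017" and "latest" are labels chosen for this sketch.
siri = {
    "2017": {"understood": 95, "answered_correctly": 66},
    "latest": {"understood": 99, "answered_correctly": 78},
}


def point_change(metric):
    """Percentage-point change between the 2017 test and the latest one."""
    return siri["latest"][metric] - siri["2017"][metric]


# Compute the change for both metrics Loup scores on.
changes = {metric: point_change(metric) for metric in siri["2017"]}

for metric, delta in changes.items():
    print(f"{metric}: +{delta} percentage points")
```

On these numbers, the gain in correct answers is three times the gain in understanding, which suggests most of the improvement came from the quality of Siri's responses rather than from speech recognition.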

“We’re impressed with the speed at which the technology is advancing,” Loup said in a post with the results. “Many of the issues we had just last year have been erased by improvements to natural language processing and inter-device connectivity.”