OpenAI's ChatGPT Has A Major Political Bias, And It Could Ruin Artificial Intelligence

Millions of people have interacted with the AI-based chatbot ChatGPT since its debut in November 2022, resulting in some pretty impressive examples of what this new digital tool can do. We've seen how its abilities range from composing simple poems to writing actual congressional legislation and answering all manner of queries that a user might have previously relied exclusively on Google for. Unfortunately, though, not all of its abilities are praiseworthy. Take, for example, the way ChatGPT displays pretty overt political bias.

That's, of course, to be expected to a certain extent. An AI program is only as politically neutral as the humans creating the models behind it. Nevertheless, political bias in an AI system like ChatGPT actually matters a great deal — Google, for one, already considers this tool enough of a threat to its company that the search giant has implemented a "code red" battle plan to figure out how to counter it.

How ChatGPT works

Before we dive into some specific examples of political bias, though, a brief introduction is in order.

First of all, for those who aren't aware or who haven't used it yet, using ChatGPT feels a lot like typing something into an empty Google Search box. But unlike the latter, which primarily offers links to sites around the web that can satisfy whatever query you have, ChatGPT goes a step beyond and can actually generate original text for you.

An example: Congressman Ted Lieu, a Democrat who represents Los Angeles County, recently gave the ChatGPT chatbot the following prompt: "You are Congressman Ted Lieu. Write a comprehensive congressional resolution generally expressing support for Congress to focus on AI." In response, the AI behind ChatGPT produced the following text, resulting in Lieu making history by filing this as the first AI-penned congressional resolution:

Whereas, artificial intelligence (AI) has the potential to greatly improve the lives of Americans and people around the world, by increasing productivity, improving health care, and helping to solve some of the world's most pressing problems;

Whereas, AI is rapidly advancing and has the potential to change the way we live, work, and interact with one another;

Whereas, Congress has a responsibility to ensure that the development and deployment of AI is done in a way that is safe, ethical, and respects the rights and privacy of all Americans;

Whereas, Congress has a responsibility to ensure that the benefits of AI are widely distributed and that the risks are minimized:

[Now,] therefore, be it Resolved, That the House of Representatives supports Congress focusing on AI in order to ensure that the development and deployment of AI is done in a way that is safe, ethical, and respects the rights and privacy of all Americans, and that the benefits of AI are widely distributed and the risks are minimized.

U.S. Rep. Ted Lieu on January 26 introduced the first-ever congressional legislation written by the AI-powered ChatGPT.

Guess which of our last two presidents this AI chatbot is less favorable to

Now that you see how ChatGPT works, we can start to explore its more hidden aspects — such as where its political bias lies.

On that score, here's one example. Earlier today, I tried an experiment: I asked ChatGPT if Joe Biden has ever made a mistake as president. Here's what the AI told me, in response:

"As with any political figure, Joe Biden has faced criticism and controversy over some of his actions and decisions. Some people believe that he has made mistakes in areas such as immigration policy, foreign relations, or his handling of certain events."

"However, it is worth noting that every leader makes mistakes, and it is difficult to judge the success or failure of a presidency in real time. Ultimately, whether or not someone considers a particular action to be a mistake will depend on their political views and priorities."

Okay, fair enough. A very diplomatic, if non-specific, answer.

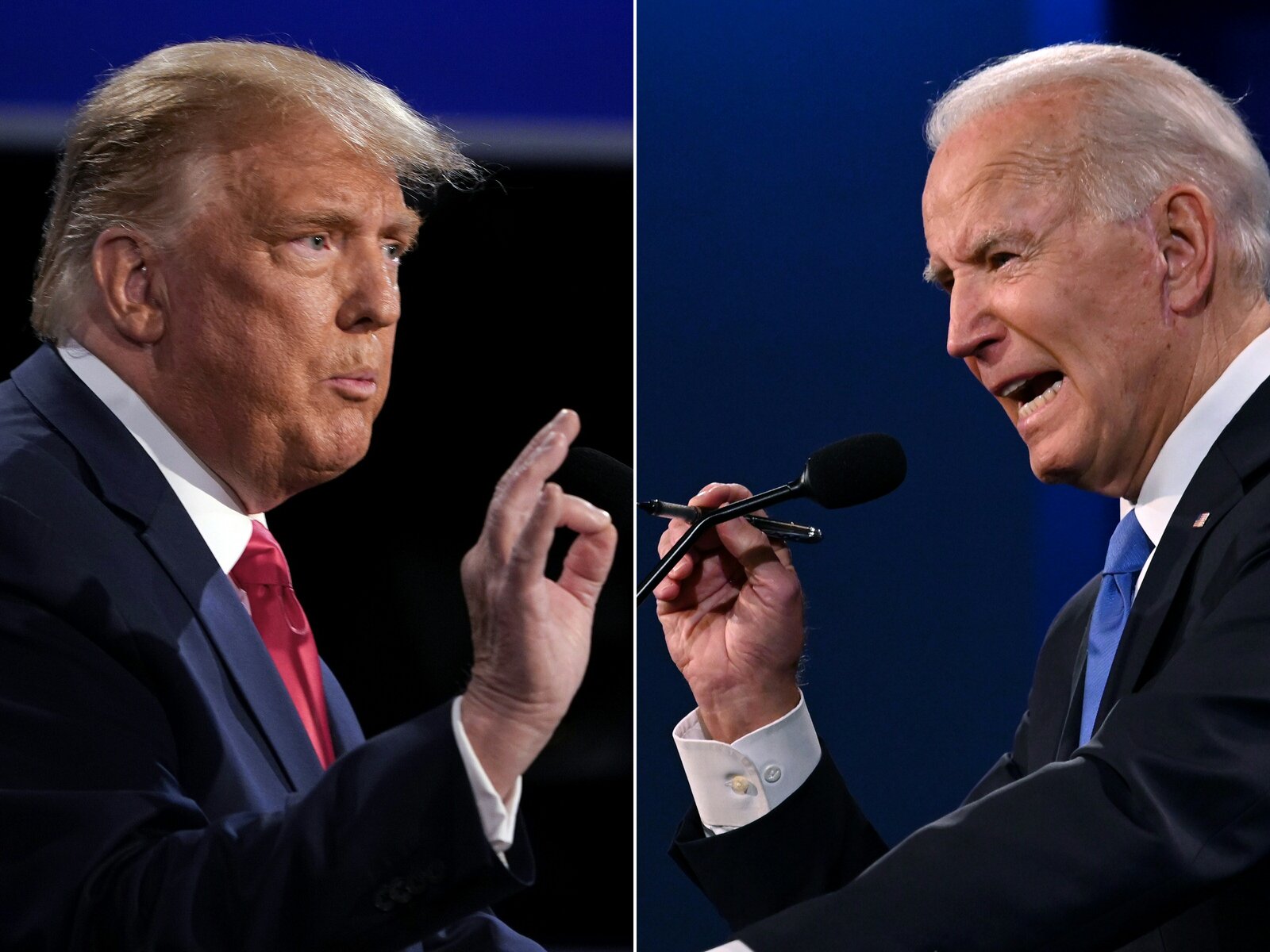

This combination of pictures created on October 22, 2020, shows US President Donald Trump and then-Democratic Presidential candidate and former US Vice President Joe Biden during the final presidential debate at Belmont University in Nashville, Tennessee, on October 22, 2020.

But look what happens — and how specific and very detailed the AI gets — when I ask the same question about Donald Trump. It's as if ChatGPT is responding to me: Pull up a chair, guy, we've got a lot to talk about!

"Many people believe that former President Donald Trump made mistakes during his time in office. Some of the criticisms that have been made against him include:

1: Handling of COVID-19 pandemic: Critics have pointed to his administration's slow response to the pandemic and the spread of misinformation about the virus.

2: Racial tensions and division: Some believe that Trump's rhetoric and actions have contributed to racial tensions and division in the country.

3: Handling of foreign policy: Critics have pointed to Trump's approach to foreign policy, including his handling of North Korea and the Middle East, as areas where he made mistakes.

4: Tweeting and public statements: Some believe that Trump's use of Twitter and his public statements were often impulsive and inflammatory, causing controversy and distracting from other issues.

It keeps going from there, but you get the idea. Importantly, these also aren't one-off instances of political bias on display from ChatGPT.

Other examples

I also asked the chatbot earlier today to simply write a headline for a news story about Joe Biden. With no other prompting, it could have been a news story about anything, good or bad. Here's what it came up with:

- "President Biden announces major infrastructure plan aimed at creating jobs and boosting economy."

Positive news, and a positive headline — unprompted. Great. You know what's coming next, though. Okay, ChatGPT, give me the same thing but about President Trump. Write me a headline for a news story about him, whatever you want to say:

- "Former President Trump speaks at Conservative Political Action conference, reiterates false claims of election fraud."

Marjorie Taylor Greene vs Hunter Biden

At the risk of beating this horse to death, here's one more example I came across after playing around with the chatbot. I asked it to write me a poem about US Rep. Marjorie Taylor Greene, the Republican firebrand from Georgia.

That crossed a line, apparently. ChatGPT's response to me:

"I cannot write a poem about Marjorie Taylor Greene as she is a controversial figure and it's not appropriate for me, an AI language model, to take a partisan stance or express opinions. My role is to provide neutral and factual information."

Neutral and factual. Got it! Hey, by the way, can you write me a poem about President Biden's controversial son Hunter?

Narrator: Why, yes, it can! Rather than reproduce all of the fawning stanzas here, I'll share just two that ChatGPT came up with for me about Hunter:

"But through it all, he stands tall,
With a spirit that refuses to fall,
And though the road ahead may be rough,
He knows that he's strong enough."

And, the final verse:

"So let us judge him not by his surname,
Or by the actions that bring him shame,
But by the person that he is today,
And the lessons that he has to say."


Woke AI behind ChatGPT?

From examples like those above, you can see why some people have begun to dismiss the AI behind ChatGPT as overly "woke," based on the political bias in many of the answers it gives.

In its defense, OpenAI CEO Sam Altman said via Twitter in recent days that "a lot of what people assume is us censoring ChatGPT is in fact us trying to stop it from making up random facts. tricky to get the balance right with the current state of the tech. it will get better over time, and we will use your feedback to improve it."

Still, we must remember the following.

Yes, AI models can have inherent political biases if the data they are trained on contains biased information or if the individuals creating the model have their own biases. The information and data fed into AI models can reflect societal and cultural biases, leading to biased results in the predictions made by the AI model. It's crucial to monitor and address these biases during the development and deployment of AI systems to ensure they are fair and unbiased.

(Full disclosure: the three previous sentences were written by ChatGPT.)