Bing Chat Ads Will Start The ChatGPT Privacy Discussion We Desperately Need

It has finally happened: a ChatGPT-based product is getting ads. That's Bing Chat, of course, and nobody should act surprised. It was only a matter of time until someone monetized a generative AI product with ads. As a reminder, OpenAI monetizes ChatGPT differently, through the ChatGPT Plus monthly subscription. But the arrival of ads on Bing Chat is actually great for a different reason. It'll hopefully open the ChatGPT privacy talk we desperately need.

As exciting as generative AI might be, let's all remember these language models gobble up large amounts of data, including user data. Companies like OpenAI, Microsoft, and Google will need all that data to train the AI models. But what happens to our data while we chat with AI via ChatGPT, Bing Chat, or Bard is still unclear, as is how that data will affect personalized ads.

The last thing I want is an AI product to remember everything I chat about and then deliver incredibly targeted ads in its responses. And I don't even want to imagine a future where AI products would be preprogrammed to drive conversations toward product placements and ad clicks.

Microsoft announced Bing Chat getting ads earlier this week without detailing the privacy implications for the end user. Microsoft focused on the fact that ChatGPT-like products can send more traffic to publishers. And Microsoft itself wants to send more traffic to publishers via Bing Chat:

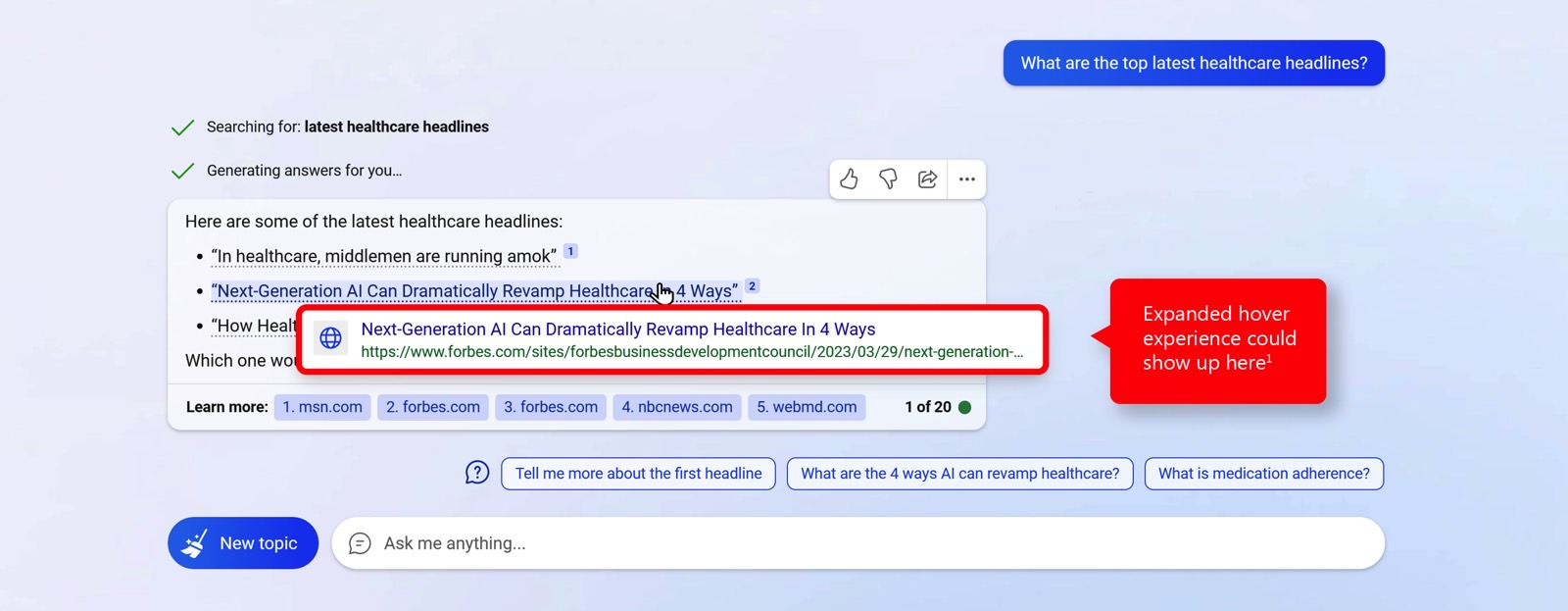

Let's start with our goals. First, we want to drive more traffic to publishers in this new world of search. It is a top goal for us, and we measure success in part by how much traffic we are sending from the new Bing/Edge. Second, we want to increase revenue to publishers. We seek to do this by both driving more traffic to them through new features like chat and answers and by also pioneering the future of advertising in these new mediums as I will describe below. Lastly, we want to go about this in collaborative fashion working with the industry to continue to foster a healthy ecosystem.

The early progress is encouraging. Based on our data from the preview, we are driving more traffic from all types of users. We have brought more people to Bing/Edge for new scenarios like chat and we are seeing increased usage. Then, we have uniquely implemented ways to drive traffic to publishers including citations within the body of the chat answers that are linked to sources as well as citations below the chat results to "learn more" with links to additional sources.

But Microsoft fails to address user privacy in the blog post. The topic doesn't even come up, which I find strange considering all the focus on privacy. Every tech company out there is stressing the importance of user privacy when talking about new products. And user privacy comes up regularly when companies like Google detail new initiatives to track users online more privately.

Compare Microsoft's Bing Chat ads blog announcement with the Microsoft Security Copilot reveal. The latter made it clear that user data doesn't leave an organization's servers.

User privacy should be front and center in generative AI, especially considering the massive upgrades ChatGPT is getting. Unlike Elon Musk and others, I'm not worried about AI taking over the world anytime soon. But I wouldn't want the generative AI chatbot I chat with to harvest information about me that could build an incredibly detailed profile for targeted ads.

A few weeks ago, we learned from a report in The Telegraph that Microsoft can read Bing Chat interactions. Even if stripped of personal data, this is still the sort of privacy-infringing activity you might not be aware of when dealing with AI. This is reminiscent of tech companies listening to voice recordings from commands users issued to smart assistants on phones and smart speakers.

OpenAI might not include ads in ChatGPT (yet), but ChatGPT also appears to be a privacy nightmare. OpenAI uses hundreds of billions of words to train its language model, including yours. It seems to disregard copyright protections as it scrapes data off the internet. And that data also includes the stuff you feed it. It's unclear what happens with your data and whether you'll ever be able to remove your interactions from ChatGPT.

As Gizmodo pointed out recently, OpenAI also gathers plenty of metadata, including "IP address, browser type and settings, and data on users' interactions with the site – including the type of content users engage with, features they use and actions they take." And OpenAI also collects your browsing activities over time and across websites. Then, some of that data might be shared with unspecified third parties without your knowledge.

And don't even get me started on Google Bard. When Google's generative AI is up and running in earnest, that's when more users and regulators will start looking into the privacy implications. Google already has a support document up explaining how to delete your Google Bard data and turn off data collection. On that note, Microsoft lets you delete your Bing Chat data as well. But the privacy protections should be a lot clearer.

Of course, language models like ChatGPT need complex training to offer awesome features. And we have to pay for the technology, either via ads, one-time fees, or subscriptions. But this doesn't change the fact that we need clear privacy policies in place for ChatGPT-like products, chatbots that might one day truly remember everything about us.