I’ve been using iOS 11 for almost a month now. As soon as Apple released the first beta, I installed it on my iPhone, without backing up my previous iOS 10.3 beta, because I like living dangerously. But since then, I haven’t looked back. iOS 11, however, isn’t perfect in beta form, and you’ll encounter various glitches and bugs that should be ironed out by September.

But it turns out that something I cataloged as a bug might actually be an iOS 11 feature that heralds good news about the iPhone 8’s iconic feature.

Apple is going to make an all-screen iPhone this year, the device we currently call iPhone 8. The phone will have a few features that are already available on various Android devices or previous iPhones — a bezel-free screen, wireless charging, a dual lens camera, 3D depth sensors, and a glass sandwich design. But there is one feature that won’t be available on any other smartphone this year: a Touch ID sensor integrated into the display.

Wait, didn’t an Apple insider just say that the Touch ID sensor won’t be placed inside the layers of the screen? Yes, he did, and he may be right. Partially.

Let’s get back to my iOS 11 bug. Multitasking on an iPhone with 3D Touch can be performed in two ways. You either press the home button twice, or you apply pressure on the screen near the left edge (3D Touch). The 3D Touch gesture does not work in iOS 11, and I thought it was a bug that needed fixing. Instead, it’s intentional.

Apple already confirmed that the 3D Touch multitasking gesture isn’t broken in iOS 11. It was removed intentionally.

Apple engineering has confirmed that 3D Touch multitasking was intentionally removed in iOS 11. I am livid. pic.twitter.com/kiCcLq9XMB

— Bryan Irace (@irace) June 30, 2017

Why did Apple do it? After all, there must be some iPhone 6s and iPhone 7 owners who use it, including yours truly and Bryan Irace.

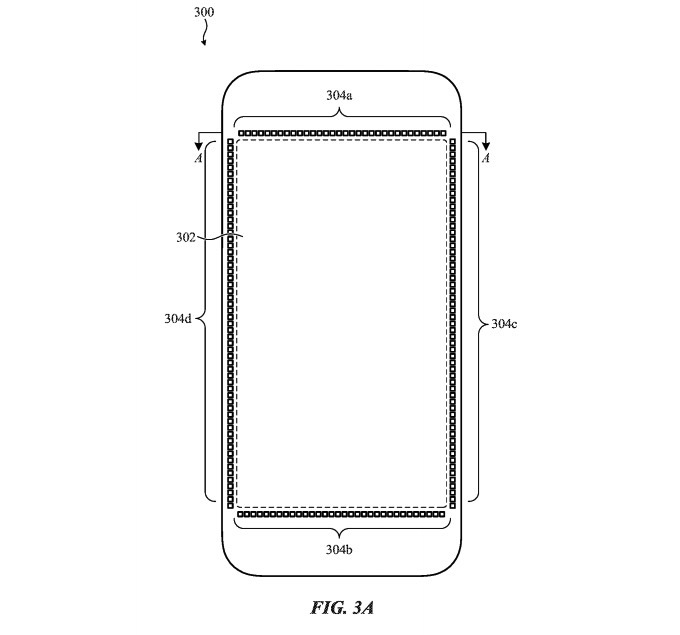

There doesn’t seem to be any explanation for this particular move unless you’ve read this Apple patent (see the following image as well).

Remember all the reports that said the Touch ID sensor will die with the iPhone 8, including Ming-Chi Kuo’s most recent predictions? They may still be right. The old Touch ID might die. Touch ID works by taking a high-resolution image of the fingerprint and comparing it with the data stored in the Secure Enclave of the chip.

Placing such a sensor in the screen is a challenging task, which is why Apple might not do it, and why Kuo and others might be right.

But that doesn’t mean the screen will not register the fingerprints using a different kind of technology.

The patent I mentioned calls for using acoustic sensors placed around the display’s edges that will emit and interpret acoustic waves to read fingerprints. Now, if these acoustic sensors need to be placed along the edges of the screen, then maybe something else needs to be sacrificed, like 3D Touch functionality around the edges of the screen.

There’s no evidence to link the removal of this 3D Touch gesture with the introduction of a new Touch ID fingerprint reading tech. But Apple may be toying with a novel Touch ID technology for the iPhone 8, one that’s not easy to deploy. Recent reports said that Samsung is experiencing yield problems with the OLED displays it makes for the iPhone. Since Samsung is the king of OLED screens for smartphones right now, that can only be interpreted as a sign that Apple’s OLED screen needs are more complex than Samsung’s. Including any kind of sensor in the display, whether traditional Touch ID, ultrasound, or acoustic, would likely turn the manufacturing process into an even more laborious task.

I’m just speculating at this time, and the simpler explanation might be that Apple has developed a different way to activate multitasking on the iPhone using 3D Touch, which will be revealed with the next-generation handset. What Apple can’t do is eliminate the multitasking gesture for good. The home button may be going away, but that gesture is integral to one’s iPhone experience. One way or the other, multitasking has to exist on the iPhone, and it has to be related to 3D Touch, especially on the iPhone 8, which won’t have a physical home button.